Production-ready n8n MCP servers need a gateway

n8n's MCP Server Trigger ships with a bearer token and per-workflow opt-in. Six months later you have ten endpoints, one token, and no idea who calls what.

You added n8n's MCP Server Trigger to a workflow. You set a bearer token. You exposed the endpoint through your tunnel and pointed an AI agent at it. A month later there are six MCP endpoints behind that one token, nobody is sure who calls what, and a quiet voice asks what happens when a prompt tells the agent to call delete_customer.

An n8n MCP server gives you the protocol. It doesn't give you the rest. Below are five concrete failure modes the bearer token doesn't cover, with reproducible shapes for each, and the gateway-side config that closes them.

What n8n's MCP Server Trigger gives you out of the box

The MCP Server Trigger node is straightforward. You drop it at the top of a workflow, set a path, optionally set authentication, and the workflow becomes an MCP endpoint. Two auth options ship: Bearer (the client sends Authorization: Bearer <token>) and Header (you choose a header name and required value). Both are static credentials baked at workflow build time.

For free you get:

- Per-workflow opt-in. A workflow has to enable MCP exposure explicitly; nothing is exposed by default.

- Workflow-level execution logs. Each tool call is captured as an n8n execution.

- A canonical

tools/listresponse built from the trigger's input schema.

What you don't get is the production envelope around that. The n8n MCP Server Trigger docs defer rate limiting to "your reverse proxy" and don't cover authentication beyond static tokens. There's a community feature request from March asking for OAuth, with no staff response yet.

A canonical call looks like this:

curl -fsS https://n8n.example.com/mcp/orders/sse \

-H "Authorization: Bearer ${N8N_MCP_TOKEN}" \

-H "Accept: text/event-stream"

That bearer is the entire access control surface. Whoever has it can call every tool the workflow exposes.

Scenario 1: one bearer token, every tool

Your workflow exposes three tools: search_customer, get_invoice, delete_customer. The first two are safe for a frontend agent that helps support reps. The third belongs to one tightly-controlled internal flow.

n8n has no notion of per-tool authorization. The bearer authorizes the connection; once accepted, every tool the workflow declares is callable. There's no field on the trigger node for "tool X requires elevated credentials".

You might think the MCP client in n8n could narrow this by passing a different token per tool. It can't, today. Issue #23421 is closed-stale on a bug where the n8n MCP client drops the Authorization header entirely when tool selection is set to anything other than "all". The granular path doesn't work even when you'd want to use it.

A gateway in front replaces the bearer with a key model where each key carries explicit per-tool tags:

# Mint a key for the support frontend: search_customer + get_invoice only.

curl -fsS -X POST "${AIRONCLAW_URL}/api/keys" \

-H "Authorization: Bearer ${PAT}" \

-H "Content-Type: application/json" \

-d '{

"name": "support-frontend",

"mcpPermissions": [{

"id": "<n8n-mcp-uuid>",

"tools": ["search_customer", "get_invoice"]

}]

}'

The minted key carries mcp:<uuid>:tool:search_customer and mcp:<uuid>:tool:get_invoice tags. A tools/call for delete_customer returns 403 at the gateway, before the request ever reaches n8n. The internal flow uses a separate key with mcp:<uuid>:tool:*.

Scenario 2: an open shell with no rate limit

Your n8n cloud instance charges per execution. Your self-hosted instance has a finite worker pool. Either way, an MCP endpoint with no upstream-side rate limit is a denial-of-wallet (cloud) or a denial-of-service (self-host) waiting for a misbehaving agent.

The n8n docs say it plainly: rate limiting is not a feature of the MCP Server Trigger node. Their recommendation is nginx in front. That's reasonable if you have one, but on its own nginx caps an IP, not a consumer. A single API key with a 1000 RPS loop looks identical to legitimate traffic from the same IP.

A gateway-side rate_limit rule keys on the API key, with a ban window for repeat offenders:

{

"rule_type": "rate_limit",

"tools": ["*"],

"name": "per-key-default",

"match_key": "api_key",

"threshold": 60,

"timespan": 60,

"ban_after_n_exceeded": 20,

"ban_timespan": 600

}

This caps every API key at 60 calls per minute across all tools on the proxy. After 20 over-threshold requests inside that window, the key is banned for 10 minutes. The ban short-circuits in the access phase before n8n is touched. Tighten by tool with tools: ["delete_customer"] and a smaller threshold for the destructive ones; keep the looser ["*"] rule alongside as a safety net.

Scenario 3: a tool returns a customer record. The LLM logs it.

An agent calls get_invoice. Your workflow returns the customer record: name, email, last-four of the card. The agent passes that response back to the LLM, which writes it into its conversation context. The conversation log is stored. The LLM trace is shipped to whatever observability tool you use. Your DPO finds out three months later from a customer complaint.

n8n has no opinion on what's in the response body. The MCP server returns whatever the workflow built. You can write a workflow node that strips fields, but that's per-workflow plumbing, easy to forget on the next workflow you ship, and impossible to audit centrally.

A gateway-side response_replace rule is a regex applied to the response body before it leaves the gateway:

{

"rule_type": "response_replace",

"tools": ["get_invoice", "search_customer"],

"pattern": "[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\\.[A-Za-z]{2,}",

"replacement": "[email]",

"regex_flags": "i",

"dlp_rule_id": "email_v1"

}

Email addresses become [email] before the agent sees them. Add a second rule for card-shaped digit runs (\b(?:\d[ -]*?){13,16}\b → [card]), a third for any internal identifier you don't want leaving the perimeter. The rule applies at the gateway, so a new workflow that exposes get_invoice inherits the policy automatically.

Scenario 4: the same answer, computed a thousand times

Half the n8n MCP workflows people ship are lookup-shaped: a query, an upstream call, a response. The query is get_pricing("acme-pro"). The response is { "tier": "pro", "cents": 4900 }. It changes once a quarter.

The agent doesn't know that. It calls get_pricing("acme-pro") every time it answers a question about the Pro tier. n8n executes the entire workflow every time. If the upstream is paid (Stripe, a SaaS vendor, an LLM call inside the workflow), every cache miss is real money plus the latency of a round trip.

A gateway-side static_cache rule keys the response on the JSON-RPC method plus the canonical hash of the parameters:

{

"rule_type": "static_cache",

"tools": ["get_pricing", "get_customer_tier"],

"cache_ttl": 600,

"cache_isolation": "per_identity"

}

per_identity is the safe default: each API key gets its own cache slot, so one tenant's lookup never serves another tenant's query. A 10-minute TTL on a quarterly-changing value is conservative; the only thing it costs is a 10-minute lag after a price change. The cache is per tool, so destructive or interactive tools (delete_customer, chat) stay un-cached.

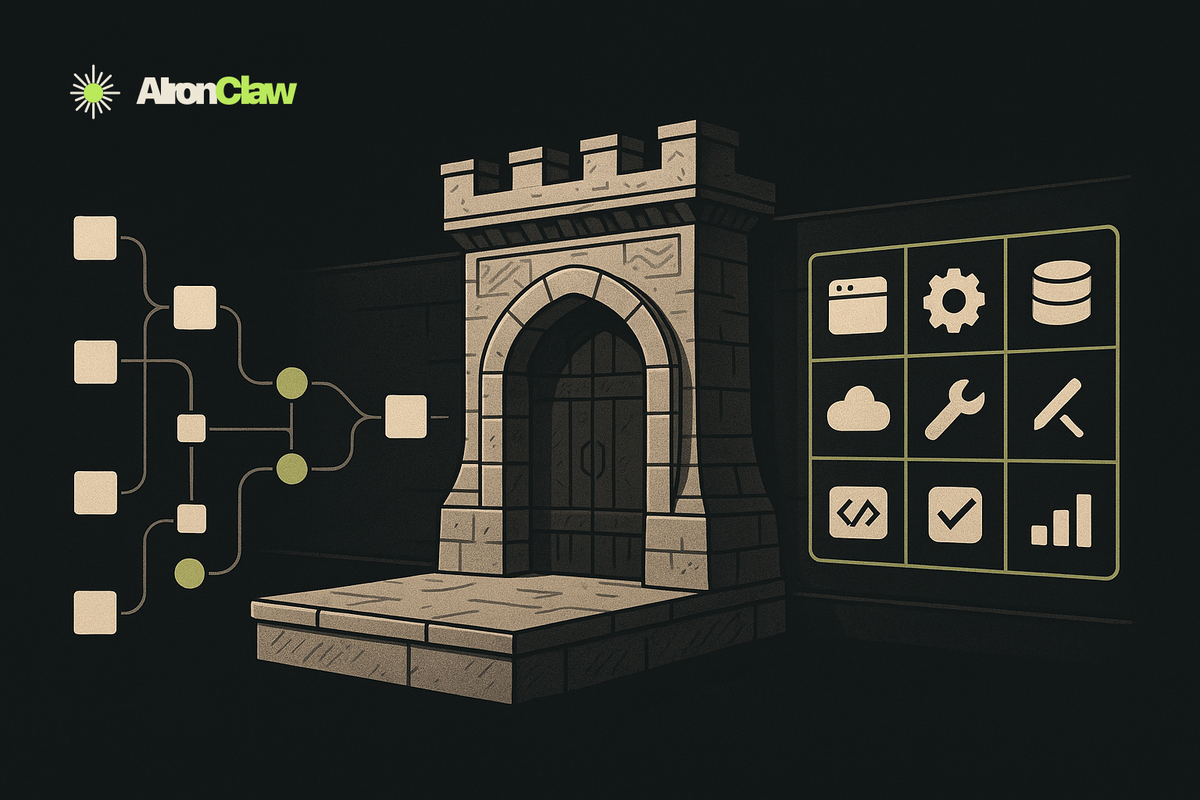

Scenario 5: your n8n MCP gains tools you didn't write

Your n8n instance exposes three workflows. You also want the agent to do web search and to read a public docs site. Writing those as n8n workflows is overkill: you'd be wrapping httpx calls in node UI for no real reason, then maintaining the tokens for the upstream API on the n8n side too.

This is where AIronClaw's shared MCP catalog earns its keep. The catalog is a list of MCP upstreams the platform operator pre-approves: a web search endpoint, a docs lookup, a Stripe shim, anything an agent commonly wants. You attach catalog entries onto your existing n8n proxy:

curl -fsS -X POST "${AIRONCLAW_URL}/api/mcp/<n8n-mcp-uuid>/shared-mcps" \

-H "Authorization: Bearer ${PAT}" \

-H "Content-Type: application/json" \

-d '{ "sharedId": "<catalog-search-id>", "enabledTools": ["search", "fetch"] }'

After this call, the agent's tools/list response on the same proxy URL includes your three n8n workflow tools plus search and fetch from the catalog. The bearer token configured on the catalog upstream is decrypted in-process by the gateway and never reaches your n8n install. Per-tenant rate limits, DLP, and the per-tool permission model from Scenario 1 all apply uniformly across native and catalog tools.

The catalog isn't a feature you'd build on top of n8n. There's no "import an MCP server from somewhere else" primitive in n8n MCP today; the unit of exposure is a workflow. A gateway gives you a different unit: an upstream catalog that any of your proxies can pull from. One n8n instance exposing three workflows ends up serving an agent that sees ten useful tools.

Putting an n8n MCP server behind a gateway

Five rules, one shared catalog, one diagram:

The pattern is the same one any production HTTP service evolves into: keep the application server focused (n8n is a workflow engine, very good at orchestration), and put the cross-cutting concerns at the edge. n8n shipped the protocol and the workflow surface. Authentication granularity, rate limits, redaction, caching, and a shared upstream catalog are exactly the layer a gateway is good at.

If you're running an n8n MCP server in production today, the bearer token is doing a lot of load-bearing work it wasn't designed for. The gap between "it works in dev" and "it's safe to leave running while you sleep" is closed by the things in this post.

What to try

- Read the Model Context Protocol specification if you haven't yet — the security boundaries are clearer once you've read the wire format.

- Try AIronClaw on top of an existing n8n MCP server. The five rules above are the ones to set up first.